@vhoang Thanks for the fix.

@JocelynUrvoy Glad to know you got it to work, and thanks for sharing your progress with us. Yes, I second your interpretation of Daylight Autonomy as ‘Daytime’ Daylight Autonomy. I especially like your idea of trimming the night hours out of the .ill files.

@OlivierDambron Yes, this component is not very effective for big files. I have already pushed it to the limit and it fails completely above 40-50 points, even on a system with 64 GB of RAM. In fact, Grasshopper crashes after using about 50 GB of RAM, with another 14 GB still to spare. This is a very temporary solution, as Mostapha hopes to release the more elegant SQL database implementation for result management soon.

@JocelynUrvoy and @MohammadHamza

I wonder what would happen if we trim the night hours and later set occupancy hours for annual metrics that extend past sunset at certain times of the year (for example, a fixed occupancy of 8-20 in winter, when the sun sets at 17).

Would it be possible to calculate DA[300] for daytime hours?

@OlivierDambron

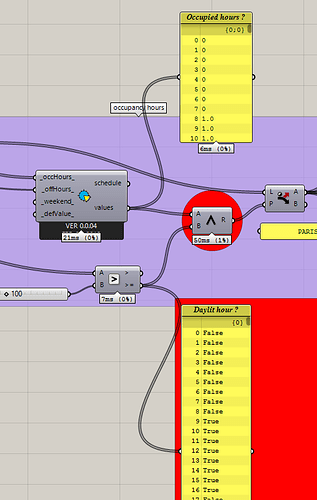

For that I use the “AND” gate between the occupied hours and the daytime hours in the script I attached.

Circled in red below
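The same “AND” logic can be sketched in plain Python. This is only an illustration, not the attached script: `daytime_da`, the hourly lists, and the sample values are all hypothetical, and it assumes one illuminance value per hour for a single sensor point.

```python
def daytime_da(illuminance, occupied, daytime, threshold=300.0):
    """Return the percentage of counted hours that meet the threshold.

    An hour is counted only if it is BOTH occupied and daytime,
    which mirrors the "AND" gate between the two hour lists.
    """
    # The "AND" gate: keep hours that are occupied and in daylight.
    counted = [o and d for o, d in zip(occupied, daytime)]
    total = sum(counted)
    if total == 0:
        # No hour is both occupied and daytime, so nothing to evaluate.
        return 0.0
    met = sum(1 for lux, c in zip(illuminance, counted)
              if c and lux >= threshold)
    return 100.0 * met / total


# Toy data (hypothetical): six hours for one sensor point.
lux = [0, 150, 400, 800, 320, 0]
occ = [False, True, True, True, True, True]   # occupancy schedule
day = [False, True, True, True, True, False]  # sun is up

print(daytime_da(lux, occ, day))  # 75.0
```

With this approach the last occupied hour, which falls after sunset, is left out of the denominator instead of counting as a failed hour.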

Hello everyone,

The link that @JocelynUrvoy shared has now expired! Can anyone share with me the results of a simulation with a couple of thousand points? I’m testing the performance of the new implementation and I want to see how well it works against larger datasets.

I will share a code sample here soon (in a couple of days) so you can also test it on your side.

Thanks!

Hi @mostapha

Here’s a new link with the same content (this was before the fix in “How to load the results from a file into Grasshopper using Honeybee[+] API?”).

It’s 1700 points.

I’ve got some heavier files if you prefer (25k points), just let me know.

Looking forward to testing the new version!

Thanks Mostapha

Hi @JocelynUrvoy,

Thanks! I don’t actually need the Grasshopper file. Well, I will only need the list of hours if you didn’t run it for the whole year, but that is fine; I can just put in some random hours. If possible, I will need the 3 .ill files in the results folder. In the new workflow the final results will be generated inside the database from the 3 other calculations.

Let’s go for 25K points. This one was loaded to database successfully and I could process it with no issues but it was a little bit of cheating as I was only loading the final results.

Hi Mohammad,

Does this still work with HB[+] version 0.0.05?

In my case, the component will output the following error:

- Solution exception:Multiple targets could match: int(type, IList[Byte]), int(type, object), int(type, Extensible[float])

I was not getting the error with 0.0.04. Any ideas as to why?

This should fix the issue:

You can also avoid the issue by passing the start line number:

Change

analysisGrid = AnalysisGrid.from_file(_pts_file)

to

analysisGrid = AnalysisGrid.from_file(_pts_file, 0)

Yes, this fixed it, thank you!

Hi @mostapha

Thanks a lot!

The .ill and .pts files are obviously flattened. I didn’t manage to re-split/re-organize the results into the same tree structure with multiple analysis grids. Is there a way to retrieve that from the recipe? I wonder where the grid names are stored.

- I didn’t manage to use AnalysisGrid.name.

- I was not able to use start_line and end_line correctly to split the results into consecutive domains, given that I know the length of the results I am trying to obtain.
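For reference, the consecutive-domain split described above can be sketched in plain Python, independent of the Honeybee[+] API. It assumes the number of points in each grid is known and that the flattened results keep the grids in their original order; `split_by_counts` and the sample sizes are hypothetical names and values used only for illustration.

```python
def split_by_counts(flat_values, counts):
    """Split a flat list into consecutive sublists of the given lengths."""
    if sum(counts) != len(flat_values):
        raise ValueError(
            "Grid sizes add up to %d but there are %d values."
            % (sum(counts), len(flat_values))
        )
    branches = []
    start = 0
    for count in counts:
        end = start + count  # end of this grid's consecutive domain
        branches.append(flat_values[start:end])
        start = end          # the next grid starts where this one ended
    return branches


# Toy data (hypothetical): 10 flattened results from grids of 3, 5 and 2 points.
values = list(range(10))
grid_sizes = [3, 5, 2]
branches = split_by_counts(values, grid_sizes)
print(branches)  # [[0, 1, 2], [3, 4, 5, 6, 7], [8, 9]]
```

Each sublist could then go into its own branch of a data tree. The same running start and end values are what one would presumably pass as start_line and end_line, though whether end_line is inclusive is something to check against the library.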