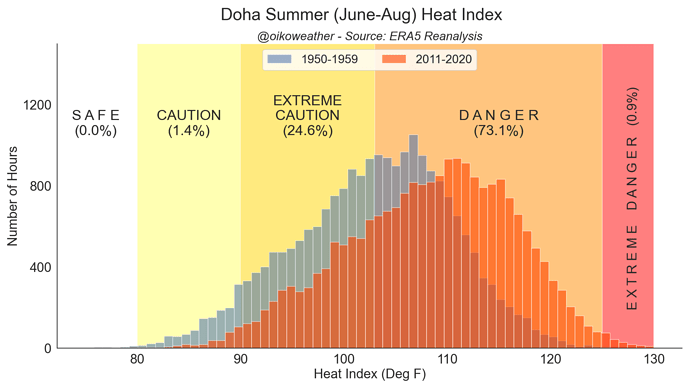

The scary thing about climate change for building designers might be that the future climate over a building’s design life (30 to 50 years?) is really not predictable with any measure of reliability. NOAA recently released its new 30-year climate normals, and even this concept of 30-year average weather is being challenged, perhaps rightly so. For instance, here’s the change in heat index for Doha over the last 70 years, which looks rather alarming:

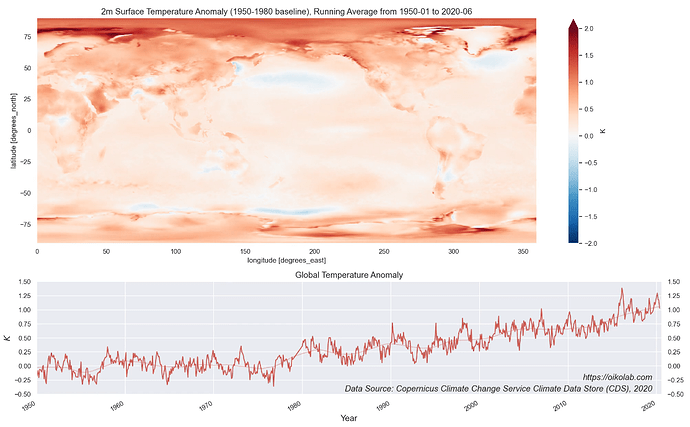

But this isn’t happening uniformly, as you can see below, and to complicate things further, the changes also vary by season. For instance, if you meet someone from Alabama who doesn’t believe in global warming based on their own anecdotal experience, it’d actually be quite understandable.

Add to this all the different emission scenarios and the various climate models, and I’m wondering if the idea of the TMY (typical meteorological year) needs to be updated somehow too.