Hi all,

Last month @FrederikRasmussen and I finished our short post-graduate internship at Henning Larsen Architects. During the internship we got the chance to work with Ladybug Tools. One result is the following three-phase (3PH) image-based recipe. It should not be seen as a final solution and has only been tested on two small case models, but we wanted to share it with the Ladybug community, so feel free to improve it or report errors if you decide to test it.

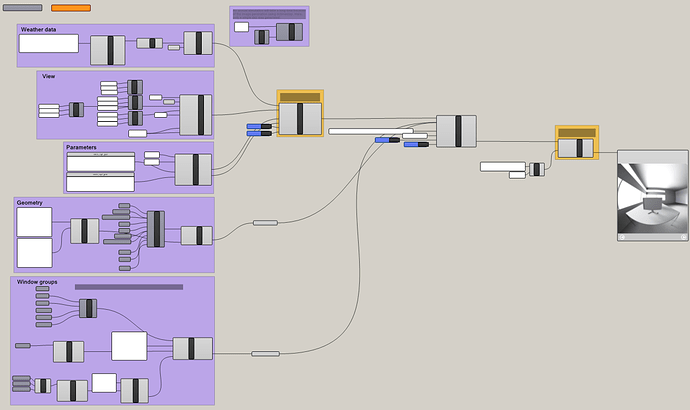

Workflow

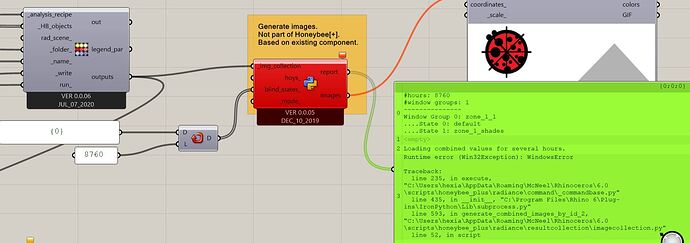

This is an overview of a simple workflow with the simplest post-processing, i.e. combining the different window group combinations into one image per hour. The only components that are not part of Ladybug Tools are the two grouped in orange. More details on these below.

Components and code changes

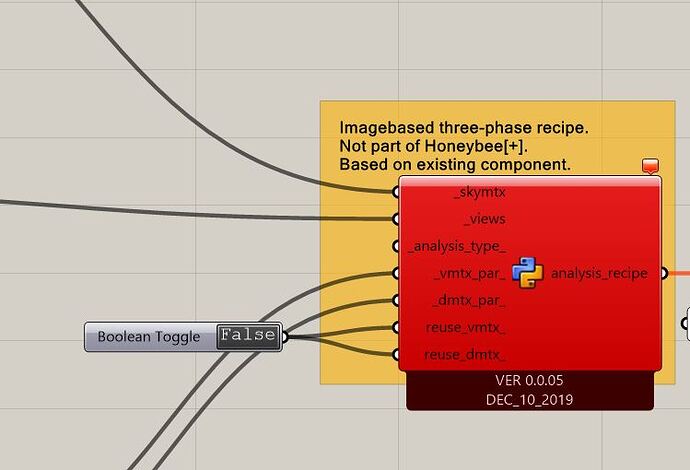

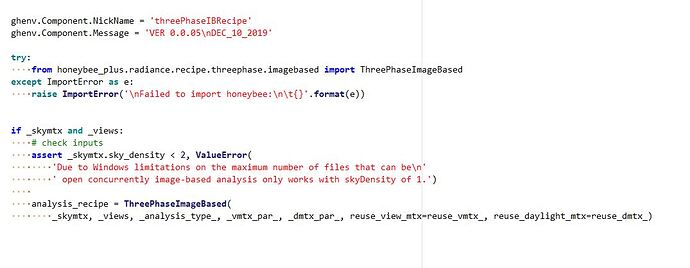

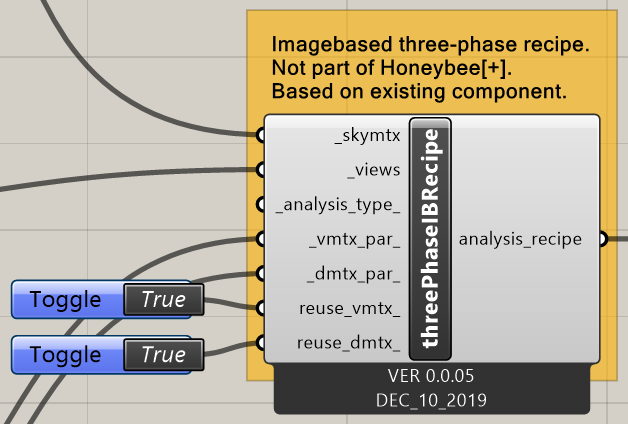

The component for the 3PH image-based recipe is based on the DC component, with an added input for the view matrix. Unlike the grid-based 3PH recipe, it does not include the initial DC calculation with regular glazing surfaces. So if your model has both regular glazing and BSDF files, this will not work: only the window groups with BSDFs will be simulated.
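For readers new to the three-phase method, the image for one window group at one hour can be thought of as a weighted sum of the view-matrix images, where the weights come from multiplying the BSDF transmission matrix, the daylight matrix and the sky vector. The sketch below illustrates this in plain Python with toy dimensions (3 patches instead of 145, images of 2 pixels). All function and variable names are made up for illustration and are not taken from imagebased.py; in practice Radiance's dctimestep does this work.

```python
def mat_vec(matrix, vector):
    """Multiply a matrix (list of rows) by a vector."""
    return [sum(a * b for a, b in zip(row, vector)) for row in matrix]


def three_phase_image(view_images, t_matrix, d_matrix, sky_vector):
    """Combine the view-matrix images of one window group for one hour.

    view_images: one image (flat list of pixel values) per Klems patch.
    t_matrix:    BSDF transmission matrix (patches x patches).
    d_matrix:    daylight matrix (patches x sky patches).
    sky_vector:  sky patch values for the hour.
    """
    # Contribution of each interior patch: T * (D * s)
    weights = mat_vec(t_matrix, mat_vec(d_matrix, sky_vector))
    n_pixels = len(view_images[0])
    # Weighted sum of the per-patch images (the view-matrix step)
    return [
        sum(w * img[p] for w, img in zip(weights, view_images))
        for p in range(n_pixels)
    ]


def sum_images(images):
    """Add the images of several window groups pixel by pixel."""
    return [sum(pixels) for pixels in zip(*images)]


# Toy data: 3 patches, 2 sky patches, 2 pixels per image
view_images = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
t_matrix = [[1, 0, 0], [0, 1, 0], [0, 0, 1]]
d_matrix = [[1, 0], [0, 1], [1, 1]]
sky_vector = [2.0, 3.0]

group_image = three_phase_image(view_images, t_matrix, d_matrix, sky_vector)
combined = sum_images([group_image, [1.0, 2.0]])
```

Because there is no separate DC pass for regular glazing, only terms of this kind (one per BSDF window group) end up in the combined image.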

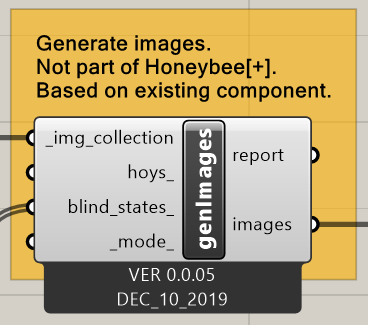

The component for generating combined images is based on the existing component of the same name, genImages. The contribution is not split into total, direct, and sun like in the DC recipe, so the input _mode_ has no effect, though it is still there. The inputs hoys_ and blind_states_ should work as usual. Just remember that there is no initial DC simulation, meaning that the number of blind state IDs should equal the number of window groups.
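If you want to catch a mismatch before running the component, a small check like the one below could help. This is only a sketch: it assumes each blind state combination is available as a sequence of integer state IDs, and `check_blind_states` is a hypothetical helper, not part of HB[+] or of the files in the download.

```python
def check_blind_states(blind_states, window_group_count):
    """Check that every combination has one state ID per window group.

    blind_states: list of sequences of state IDs, one sequence per
                  combination (assumed representation).
    window_group_count: number of window groups with BSDFs in the model.
    """
    for index, states in enumerate(blind_states):
        if len(states) != window_group_count:
            raise ValueError(
                'Combination %d has %d state IDs but the model has %d '
                'window groups.' % (index, len(states), window_group_count)
            )
    return True


# Two window groups, two combinations: every entry has exactly two IDs
ok = check_blind_states([(0, 1), (1, 1)], 2)
```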

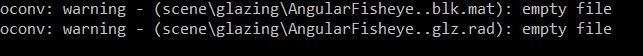

The two components will not work without altering some of the code. There is a new Python file for the recipe (imagebased.py). Apart from that, only two existing Python files are edited, and these changes should (hopefully) not interfere with workflows for other recipes.

The animation below illustrates different combinations of window groups through a day. The combined images were made with the component shown above; the falsecolor images, however, were created externally with Radiance from the command prompt.
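For anyone who wants to script the falsecolor step instead of typing it in the command prompt, here is a rough sketch that builds the argument list for Radiance's falsecolor program. The scale, number of divisions and legend label are placeholder values, not the settings used for the animation, and `falsecolor_command` is just an illustrative helper.

```python
def falsecolor_command(input_hdr, scale=2000, divisions=10, label='cd/m2'):
    """Build the argument list for a Radiance falsecolor call.

    scale, divisions and label are placeholder defaults (assumptions);
    adjust them to your own images.
    """
    return [
        'falsecolor',
        '-i', input_hdr,        # input picture
        '-s', str(scale),       # top of the legend scale
        '-n', str(divisions),   # number of legend divisions
        '-l', label,            # legend label
    ]


cmd = falsecolor_command('combined_0012.hdr')
```

falsecolor writes the resulting picture to standard output, so to actually run it you could pass `cmd` to `subprocess.run` with `stdout` pointing at an open output file (this requires Radiance to be on your PATH). The file name `combined_0012.hdr` is only an example.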

Download

If you want to test it you can find a download link here. It includes:

A Rhino model, a Grasshopper file (geometry should be internalised), the BSDFs used in this example, Python files, and details about the code changes in a text document.

Notes

- Since the code has mostly been copy-pasted and edited to fit the recipe, there might be some redundant code and comments in the Python files.

- A direct skymatrix will be written and appended to commands.bat though it is not used.

- Reusing the matrices should work. However, it only checks whether the first image (000.hdr) of the view matrix exists. If you have for some reason deleted any of the other 144 images, it will not redo the raytracing for that window group. The DC recipe loops through all the images and also checks the file size, which could be added here too.

- Some of the added Python definitions follow a lazy naming convention, so some have simply been given a “_2” at the end of the name of the existing definition they are based on.

- The workflow worked well with a fresh HB[+] update from around early December. Right now, I think it will still work if you have updated HB[+] recently.
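Regarding the note on reusing matrices: a more thorough check, similar in spirit to what the DC recipe does, could loop over all 145 view-matrix images and also look at the file size. The sketch below assumes the images sit in one folder per window group and are named 000.hdr to 144.hdr; the function name and the `min_size` threshold are my own placeholders, not code from the download.

```python
import os

KLEMS_PATCH_COUNT = 145  # 000.hdr plus the other 144 images


def view_matrix_is_complete(folder, count=KLEMS_PATCH_COUNT, min_size=1):
    """Return True only if every view-matrix image exists and is not empty.

    folder:   directory holding the images for one window group (assumed layout).
    min_size: smallest acceptable file size in bytes (placeholder value).
    """
    for i in range(count):
        path = os.path.join(folder, '%03d.hdr' % i)
        if not os.path.isfile(path):
            return False  # image is missing, raytracing must be redone
        if os.path.getsize(path) < min_size:
            return False  # image is empty or truncated
    return True
```

If this returns False for a window group, the raytracing for that group would be rerun instead of reusing the existing images.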